Pinnacle and Shor's

by Sebastian Hassinger

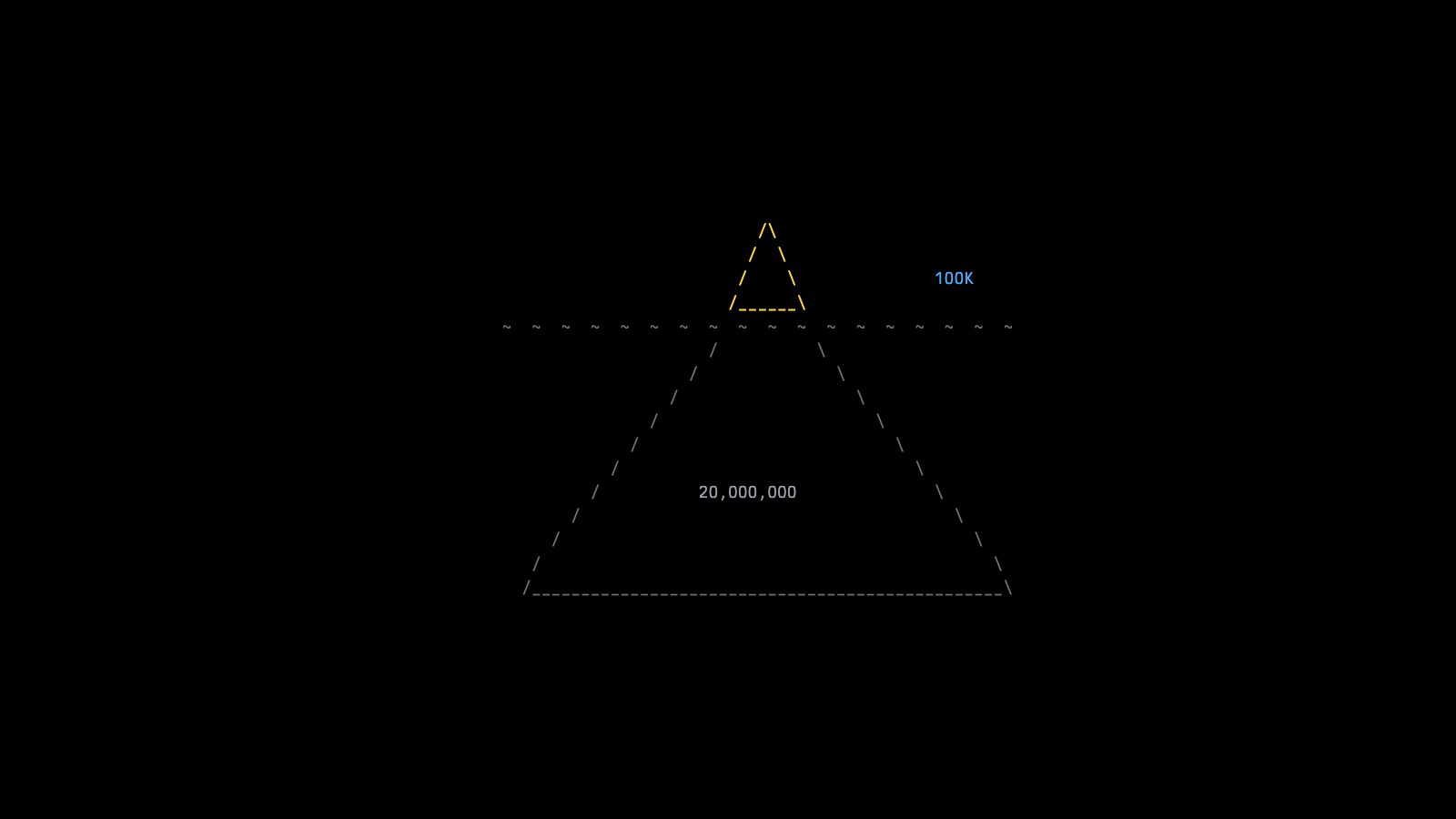

Iceberg Quantum launched with a theoretical result that turned heads: cracking RSA-2048 with 100,000 physical qubits.

Pinnacle, Shor's, and the Road from 20 Million to 100,000 Qubits

Iceberg Quantum is a very new startup — spun out of the University of Sydney, where co-founders Felix Thomsen (CEO), Larry Cohen (CSO), and Sam Smith (CTO) all did their PhDs under Stephen Bartlett, one of the world's leading experts in quantum error correction. They made quite a splash with their launch, because they didn't just announce a company — they announced a result.

Their error correction framework, which they call Pinnacle, estimates that fewer than 100,000 physical qubits could factor RSA-2048. To appreciate why that number landed like a thunderclap, you need some context.

What Pinnacle Actually Is

Pinnacle is a full fault-tolerant quantum computing architecture built using quantum LDPC codes — specifically, generalized bicycle codes — rather than the surface codes that have been the default assumption in the field for years. LDPC stands for low-density parity-check. The classical version was invented by Robert Gallager at MIT in 1960, largely ignored for decades because the hardware of the era couldn't handle the iterative decoding, and then rediscovered in the mid-1990s. The quantum adaptation, QLDPC, is now having its moment.

The key advantage is efficiency. A surface code encodes a single logical qubit in hundreds of physical qubits. Pinnacle's generalized bicycle codes encode 14 logical qubits in 860 physical qubits: roughly 61 physical qubits per logical qubit, compared to 500+ for surface codes at similar code distances.
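To make the overhead comparison concrete, here is a small arithmetic sketch using only the figures quoted above. The function name is hypothetical, and the surface-code figure of 500 is the lower bound given in the text, not a measured value.

```python
def physical_per_logical(n_physical: int, k_logical: int) -> float:
    """Physical qubits spent per logical qubit for one code block."""
    return n_physical / k_logical


# Figures quoted in the text: 14 logical qubits in 860 physical qubits.
pinnacle_ratio = physical_per_logical(860, 14)

# Lower bound quoted in the text for surface codes ("500+").
surface_ratio = 500

print(round(pinnacle_ratio, 1))                  # 61.4
print(round(surface_ratio / pinnacle_ratio, 1))  # 8.1
```

On these numbers the code-level saving is roughly 8x at minimum. The rest of the gap between one million and 100,000 qubits has to come from elsewhere in the architecture and the algorithm.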

Iceberg isn't alone in pointing toward QLDPC. IBM's bivariate bicycle code paper and their forthcoming biplanar chip are QLDPC implementations. Google's Willow chip, by contrast, demonstrated surface-code error correction. What distinguishes Pinnacle is that it's a more complete architectural framework — not just a code, but a system for computation: modular processing units, "magic engines" for universal gate operations, shared memory, and a technique called Clifford frame cleaning that enables flexible parallelism across processing units.

Important caveat: this is a theoretical resource estimate, not an experimental demonstration. Nobody is breaking RSA today. The Iceberg team is the first to say so — they even changed the paper's title after Scott Aaronson warned them that "Breaking RSA-2048 with 100,000 Qubits" would be misread as a claim they'd actually done it.

Why RSA-2048 Captures the Imagination

There's a reason this particular benchmark gets people's attention. Shor's algorithm is one of the things that put quantum computing on the map. You don't have to explain what a Hamiltonian is, or why simulating a molecule matters. Everyone understands encryption, and everyone understands what it means to break it.

RSA-2048 sits in the middle of the encryption spectrum. RSA-1024 is the lightweight option — SSL, on-the-wire encryption. RSA-4096 is the heavy-duty choice for high-security applications. RSA-2048 is the workhorse: widely deployed, considered completely secure against classical attack. Using the best known classical algorithm — the general number field sieve — factoring an RSA-2048 key would take roughly 300 trillion years. That's not "difficult." That's "the sun will have burned out many times over."

Shor's algorithm changes the calculus entirely. It uses quantum phase estimation to find the prime factors of very large numbers exponentially faster than any known classical method, which is why, since 1994, it has been the benchmark that everyone in quantum computing keeps coming back to.
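For readers who want to see where the quantum part fits, here is a purely classical sketch of the number-theoretic reduction that Shor's algorithm relies on: factoring reduces to finding the multiplicative order of a number. The order-finding step below is brute force and stands in for the quantum phase estimation step; the function names are my own, and this only works for toy-sized numbers.

```python
from math import gcd


def multiplicative_order(a: int, n: int) -> int:
    """Smallest r > 0 with a**r % n == 1, found by brute force.

    This is the step a quantum computer would perform with phase
    estimation; classically it takes time exponential in the bit length.
    """
    if gcd(a, n) != 1:
        raise ValueError("a must be coprime to n")
    r, x = 1, a % n
    while x != 1:
        x = (x * a) % n
        r += 1
    return r


def factor_from_order(n: int, a: int):
    """Try to split n using the order of a mod n. Returns (p, q) or None."""
    g = gcd(a, n)
    if g != 1:
        # Lucky guess: a already shares a factor with n.
        return (g, n // g) if 1 < g < n else None
    r = multiplicative_order(a, n)
    if r % 2 == 1:
        return None  # odd order: try a different a
    y = pow(a, r // 2, n)
    if y == n - 1:
        return None  # a^(r/2) is -1 mod n: try a different a
    p = gcd(y - 1, n)
    return (p, n // p)


print(factor_from_order(15, 7))   # (3, 5)
print(factor_from_order(15, 14))  # None
```

With `n = 15` and `a = 7` the order is 4, so `7**2 % 15 = 4` and `gcd(4 - 1, 15) = 3`. The unlucky choice `a = 14` returns `None`, which in the real algorithm just means picking another random `a`.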

The Origin of Shor's Algorithm

Peter Shor was working at Bell Labs in the spring of 1994. He'd been looking at Daniel Simon's algorithm, which exploited periodicity over a binary vector space, and thought: discrete logarithm is a famous unsolved problem that also involves periodicity — maybe I should try that. He found a quantum algorithm for discrete log. And as he told me, within a week or two, he'd extended it to factoring — possibly at the suggestion of his colleague Jeff Lagarias, since historically, whenever someone found an algorithm for discrete log, a factoring algorithm followed shortly after.

On a Tuesday in April, Shor presented the discrete log result in Henry Landau's weekly seminar at Bell Labs. He hadn't figured out factoring yet. By the following weekend — just four days later — Umesh Vazirani called from Berkeley: "I hear you have a factoring algorithm with a quantum computer. Tell me about it."

As Peter tells it: thankfully, in those four days between the seminar and Vazirani's call, he had in fact cracked factoring. So he was able to answer, truthfully: yes, I do.

The immediate reaction from much of the physics community was: great algorithm, but so what? Quantum coherence is fleeting. These devices are impossibly noisy. You'll never have a system stable enough to run a long calculation with any precision — it'll collapse into random noise.

Almost exactly a year later, Shor published a second landmark paper — this time on quantum error correction. He started with the nine-qubit code, a construction that was intellectually distinct from the factoring work. He then generalized it, together with Robert Calderbank, into what became CSS codes (Andrew Steane independently arrived at the same generalization). And then he turned CSS codes into a full fault-tolerant protocol.

The conventional wisdom had been that quantum error correction was fundamentally impossible, largely because of the no-cloning theorem: you can't make an independent copy of an unknown quantum state, so the classical trick of keeping redundant copies is off the table. Shor's insight was to spread the quantum state of a single data qubit across an ensemble of physical qubits (a logical qubit) and then measure ancillary qubits coupled to them. You may destroy the state of the ancilla in reading it out, but that destructive measurement tells you whether the data qubits have been affected by an error, without reading out the encoded state directly.
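The idea of learning about the error without learning the data can be illustrated with a classical toy: a three-bit repetition code whose parity checks play the role of the ancilla measurements. This is not Shor's nine-qubit code. It models only bit flips, ignores phase errors and superposition entirely, and all names are illustrative.

```python
def encode(bit: int) -> list:
    """Spread one logical bit across three physical bits."""
    return [bit, bit, bit]


def syndrome(block) -> tuple:
    """Two parity checks, standing in for the ancilla measurements.

    The result depends only on which bit was flipped, not on whether
    the encoded value is 0 or 1.
    """
    return (block[0] ^ block[1], block[1] ^ block[2])


# Which position each syndrome points at (None means no error detected).
SYNDROME_TO_FLIP = {(0, 0): None, (1, 0): 0, (1, 1): 1, (0, 1): 2}


def correct(block) -> list:
    """Undo a single bit flip using only the syndrome."""
    flip = SYNDROME_TO_FLIP[syndrome(block)]
    fixed = list(block)
    if flip is not None:
        fixed[flip] ^= 1
    return fixed


noisy = encode(1)
noisy[2] ^= 1            # a single bit-flip error on the third bit
print(syndrome(noisy))   # (0, 1)
print(correct(noisy))    # [1, 1, 1]
```

Note that `syndrome` returns the same pair whether the encoded bit was 0 or 1. That is the classical shadow of the quantum property that matters: the measurement reveals the error and nothing about the stored state.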

So both quantum factoring and quantum error correction owe their origin story to the same person. And Pinnacle — a QLDPC error correction framework applied to Shor's factoring algorithm — is, in a sense, Peter Shor arguing with himself across three decades.

The Intelligence Community Takes Notice

Of course, when a mathematician publishes a theoretical method for breaking encryption, certain government agencies pay attention. There's an anecdote — which I've heard firsthand from one of the principals involved, though I've never gotten explicit permission to name names — about one of the earliest quantum hardware experiments. The PIs were visited by a figure who held a position at MIT but was affiliated with the National Security Agency, which in those days was still "the agency that shall not be named."

He asked a lot of questions in a long meeting, and at the end said, essentially: we don't have a mechanism to fund you, but if you send me an email telling me how much you need, I'll send you a check.

That, as far as I know, is how the first quantum hardware research got funded by the federal government.

From 20 Million to 100,000

For a long time, the serious resource estimate for factoring RSA-2048 on a quantum computer was around 20 million noisy physical qubits — the number from Gidney and Ekerå's 2019 paper. That number stood for years.

Then in 2025, Craig Gidney published a paper collecting algorithmic optimizations — some proposed by others, some his own — and estimated that about one million physical qubits could do the job. That was a 20x improvement, and it made a significant splash.

Now Iceberg claims fewer than 100,000 with Pinnacle. That's another order of magnitude.

And here's the thing that makes this more than an academic exercise: 100,000 qubits is no longer a fantasy number on the hardware side. IBM's public roadmap targets 100,000 qubits by 2033 with their Blue Jay system. That's seven years away. When the resource estimate was 20 million qubits, the question of "when can we actually build this?" felt safely abstract. At 100,000, it starts to rhyme with what hardware companies are already planning to ship.

One thing I've learned from hanging around physicists: to really get their attention, you need to move the decimal place. They think in orders of magnitude. An improvement of 3x or 5x is nice. An improvement of 10x makes people sit up. And if you look at the history of qubit error rates, coherence times, gate fidelities — the real inflection points in the field have always been when someone delivers an order-of-magnitude leap. Pinnacle claims to be that, relative to Gidney's result.

The improvement comes from two sources. First, the QLDPC architecture itself: far better physical-to-logical qubit ratios than surface codes. Second, Iceberg parallelized Gidney's factoring algorithm by sharing the large input register across multiple working registers via read-only memory — the key insight being that lookup operations commute, so you don't need to duplicate the big register for each parallel stream. It's a clever trick that trades sub-linear space overhead for orders-of-magnitude runtime reduction.
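A classical toy model gives some intuition for why commuting lookups let parallel streams share one input register. I am assuming here that a lookup XORs a table entry, selected by a read-only address, into a working register. The tables, values, and function names are hypothetical, and this is not Iceberg's actual construction.

```python
def lookup(address: int, table: list, target: int) -> int:
    """XOR the table entry selected by `address` into `target`.

    `address` is only read, never modified, so it can be shared.
    """
    return target ^ table[address]


def run(order, address, tables, registers):
    """Apply one lookup per working register, in the given order."""
    regs = list(registers)
    for i in order:
        regs[i] = lookup(address, tables[i], regs[i])
    return regs


# Hypothetical data: two lookup tables, two working registers.
tables = [[3, 5, 6, 9], [12, 10, 7, 1]]
start = [0b0101, 0b0011]

# Whatever the shared address holds, the order of the lookups is irrelevant.
for address in range(4):
    assert run([0, 1], address, tables, start) == run([1, 0], address, tables, start)

print(run([0, 1], 2, tables, start))  # [3, 4]
```

Because no lookup ever writes to the address, each working register sees the same input regardless of scheduling. In this toy picture, that is why one copy of the big register can serve every parallel stream.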

The ARM for Quantum Computing

I'll admit I was initially surprised that a theoretical paper was the vehicle for launching a startup. Algorithmic improvements alone don't usually make a company. But talking with the Iceberg team, their ambition makes sense.

Their model is ARM, a company that doesn't manufacture chips or even market them. It designs architectures and licenses them to companies like Apple (M-series, A-series chips), Qualcomm (Snapdragon), and Amazon (Graviton), who then have firms like TSMC fabricate the actual silicon. ARM's value is that chip design is extraordinarily complex. An Apple M4 contains 28 billion transistors, and if you've ever seen die photography of a modern chip, it looks like an overhead view of a very large city. Starting from scratch would take you roughly as long as the semiconductor industry has existed.

Iceberg is betting the same dynamic will play out in quantum computing. Right now, quantum error correction is still in its early stages, but the complexity is already staggering. Google's Willow chip uses 105 physical qubits to make one logical qubit. Now imagine coordinating 100,000 physical qubits — or a million — with the syndrome extraction, decoding, and real-time classical control that fault tolerance demands. You're talking about engineering at nanosecond timescales on nanoscale devices. For atom-based qubits, you're controlling individual quantum particles. For superconducting qubits, you're managing ensembles of electrons behaving as a single quasi-particle. The fabrication, design, and operational tolerances are extraordinarily tight.

Today, Iceberg is doing co-design: collaborating with hardware partners like PsiQuantum (photonics), IonQ (trapped ions), Diraq (spin qubits), and Oxford Ionics (trapped ions) to optimize fault-tolerant architectures for each platform. They're building hardware-agnostic theoretical expertise through their research while getting platform-specific practical experience through these partnerships. The licensable IP library comes later, as the systems mature. They just raised a $6 million seed round led by LocalGlobe, with Blackbird and DCVC.

It's a reasonable play. Speed matters in this race — first logical qubits shipped, first non-classically-simulatable system, first practical result. If you're a hardware company trying to get there, having an architecture partner who's already solved the error correction design problem could be the difference between first and fifth.

Three Peter Shor Stories

I'll close with the anecdotes, because they're too good not to share. I interviewed Peter in April 2024, on the 30th anniversary of his algorithm, and he told me these stories himself.

The Adleman Introduction. Just a few weeks after word of the factoring algorithm got out, Shor was invited — at the very last minute — to give a talk at the first Algorithmic Number Theory Symposium at Cornell, in early May 1994. They made room in the program for an invited talk, and he flew up.

He was introduced by Len Adleman. That's the "A" in RSA — one of the three inventors of the very encryption scheme that Shor's algorithm threatens. And for the movie buffs: Adleman was also a technical advisor on the 1992 film Sneakers, starring Robert Redford, in which the plot revolves around a device that can crack any code. The film came out just two years before Shor's algorithm. Art anticipating life.

Adleman put up a slide of Leonardo da Vinci's sketch for a flying machine, and then a slide of Charles Babbage's analytical engine. He pointed out that da Vinci's flying machine had severe aerodynamic problems — it never would have worked. Babbage's computer, on the other hand, would have worked if he'd been able to machine the parts precisely enough. "We don't yet know which one of these this is," Adleman told the audience, "but it's very interesting."

As Peter told me: "These are the two most famous people I've ever been compared to in an introduction."

(By 2001, Chuang and Vandersypen had run at least a simplified version of Shor's algorithm to factor 15 on a 7-qubit NMR device — tilting things toward the Babbage end of the scale. It just needs the precision tooling.)

The April Fools' Post. Peter told me he doesn't know the exact day in April 1994 he cracked factoring, but he knows it was April — because of a post he'd read on Usenet. (For those too young to remember: Usenet was basically Reddit before Reddit. Forum-style discussion boards, with an elaborate tradition of April Fools' posts.)

On April 1st, 1994, someone on Usenet posted a joke about a Russian computer that uses quantum mechanics to factor large numbers. As Peter described it: "The computer chose two random numbers and multiplied them together, and if this wasn't the factor, it exploded a nuclear plant. So by the many-worlds hypothesis, any world in which the bomb did not explode, the computer factored."

There are, as I noted to Peter, some ethical problems with testing that solution. But the logic is sound.

Peter remembered reading that post just before his breakthrough. Which means Shor's algorithm was born in the days immediately following what may be the greatest cypherpunk shitpost of all time.

Where We Are on the Clock. I asked Peter where he thought quantum computing stands relative to the history of classical computing. His answer surprised me: "We're not even in the 1950s. We're in the 1930s and '40s." His point was that the early classical algorithms (linear programming, Monte Carlo methods) were discovered by people who could experiment with actual computers. We're not there yet in quantum. Theory is still out ahead of experiment. As he put it, the simplex algorithm for linear programming was used for years before anyone could prove it worked: "they knew it worked because you programmed it up and you ran it." A satisfactory theoretical explanation of why it runs so fast in practice didn't arrive until Spielman and Teng's smoothed analysis around 2000.

When I asked him what the field looks like in another 30 years, he said: "We'll have large-scale quantum computers that are running and solving practical problems. Certainly simulating quantum mechanics will work. There'll probably be some that are highly classified — used for cracking codes. And what else? I don't know."

Which is, honestly, part of the fun.

This post accompanies Episode 81 of the New Quantum Era podcast, featuring Larry Cohen and Paul Webster of Iceberg Quantum.
